In my article archive, I found a blog post by Steve Keifer of OpenText | GXS from a few years back entitled "The End Of Mapping (In B2B Integration)". Mr. Keifer states that maps are the most costly and most important part of a B2B integration project. He goes on to say that, with all the artificial intelligence technology that already exists, we should be able to eliminate traditional maps and instead map data automatically, based on intelligence and historical data.

For example, a data element named FirstName in one format would automatically be mapped to first_name in another format. Assuming data quality, this would be obvious. What about a data element named PersonGivenName? This too would get mapped to first_name, but it isn't quite as obvious. Over time, the mapping application would learn to map PersonGivenName to first_name.

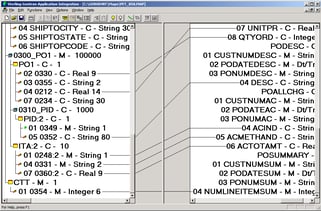
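To make the idea concrete, here is a minimal sketch of the behavior described above. It is not taken from Mr. Keifer's post or from any product; the `normalize` function and `LearningMapper` class are hypothetical names of my own. It assumes the "obvious" case can be handled by normalizing field names to a common form, and that the "learning" is nothing more than remembering a mapping once a person has confirmed it. Real AI-based mapping would presumably be far more sophisticated.

```python
import re


def normalize(name: str) -> str:
    """Reduce CamelCase, snake_case, or spaced names to lowercase snake_case."""
    # Put an underscore at each lowercase/digit-to-uppercase boundary.
    s = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", "_", name)
    # Collapse any run of non-alphanumeric characters into one underscore.
    s = re.sub(r"[^A-Za-z0-9]+", "_", s)
    return s.strip("_").lower()


class LearningMapper:
    """Hypothetical mapper: suggests target fields and remembers confirmations."""

    def __init__(self, target_fields):
        # Index the target format's fields by their normalized names.
        self.targets = {normalize(t): t for t in target_fields}
        # History of mappings a person has confirmed (the "historical data").
        self.learned = {}

    def suggest(self, source_field):
        key = normalize(source_field)
        # Prefer what was learned earlier, then fall back to a name match.
        if key in self.learned:
            return self.learned[key]
        return self.targets.get(key)  # None when there is no obvious match

    def confirm(self, source_field, target_field):
        # Record a human decision so the next map gets it automatically.
        self.learned[normalize(source_field)] = target_field


mapper = LearningMapper(["first_name", "last_name"])
obvious = mapper.suggest("FirstName")          # matched by name alone
unknown = mapper.suggest("PersonGivenName")    # no obvious match yet
mapper.confirm("PersonGivenName", "first_name")
learned = mapper.suggest("PersonGivenName")    # now answered from history
```

In this sketch `FirstName` is matched immediately, while `PersonGivenName` is only suggested after someone has confirmed it once, which is the "over time" part of the example.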

Since the blog post appeared about a year and a half ago, I did a little research to see whether any progress has been made toward artificial-intelligence-based mapping. I was unable to find anything quite to the extent discussed in the blog post, but there has been some movement toward making the mapping process less time-consuming. In an effort to make mapping consistent and modular, a few data mapping software developers have added the capability to create mapping components, or pieces. These pieces are common rules or code that can be referenced and reused across maps. Another advancement is generating a map from the document structure and sample data. This gives you a "draft" map that you can refine, saving some of the initial mapping time.
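As a rough illustration of the "draft" map idea, the sketch below pairs fields by comparing sample values. This is my own simplification, not how any particular mapping product works: the `draft_map` function and the sample records are hypothetical, and it assumes you have the same record available in both the source and the target format.

```python
def draft_map(source_sample: dict, target_sample: dict) -> dict:
    """Propose a source-to-target field map by matching sample values."""
    # Index target fields by their sample value, ignoring case and padding.
    value_to_target = {}
    for field, value in target_sample.items():
        value_to_target.setdefault(str(value).strip().lower(), field)

    draft = {}
    for field, value in source_sample.items():
        # None marks a field the mapper still has to resolve by hand.
        draft[field] = value_to_target.get(str(value).strip().lower())
    return draft


# Hypothetical sample data: one record expressed in both formats.
source = {"PersonGivenName": "Ada", "PersonSurName": "Lovelace", "Dept": "R&D"}
target = {"first_name": "ada", "last_name": "Lovelace", "phone": "555-0100"}

draft = draft_map(source, target)
for source_field, target_field in draft.items():
    print(source_field, "->", target_field or "(unmapped, needs review)")
```

The unmapped entries are the point of a draft: the tool does the easy pairings and leaves the rest for the person doing the mapping to refine.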

Although it is not the end of data mapping, we've certainly come a long way since I first mapped in 1989.